Why AI In Digital Transformation Matters Now

AI is now a core part of digital transformation for enterprises. It helps teams automate repeated work, analyze large volumes of data, and respond faster to customers and market changes. Recent enterprise research shows AI use is now common across business functions, but many organizations still struggle to scale it well.

AI in digital transformation helps enterprises automate work, improve decisions, and deliver better customer experiences. It turns data into faster action and reduces manual effort across teams. When used in real workflows, AI supports growth, efficiency, and stronger operational control.

Digital transformation is the use of digital technology across an organization to improve processes, products, operations, and customer outcomes. AI strengthens that shift by making systems more adaptive, predictive, and useful in daily work. IBM defines digital transformation as a business strategy that modernizes operations across the organization, and it describes AI transformation as the integration of AI into operations, products, and services to drive efficiency and growth.

That is why AI is no longer seen as a side experiment. For many enterprises, it is becoming part of how work gets done. It supports customer service, operations, planning, cybersecurity, and collaboration. A local example is Convay, which promotes AI-powered meeting minutes, transcription, and collaboration features as part of enterprise communication workflows.

How AI And Digital Transformation Connect

Digital transformation creates the foundation. AI adds intelligence to that foundation.

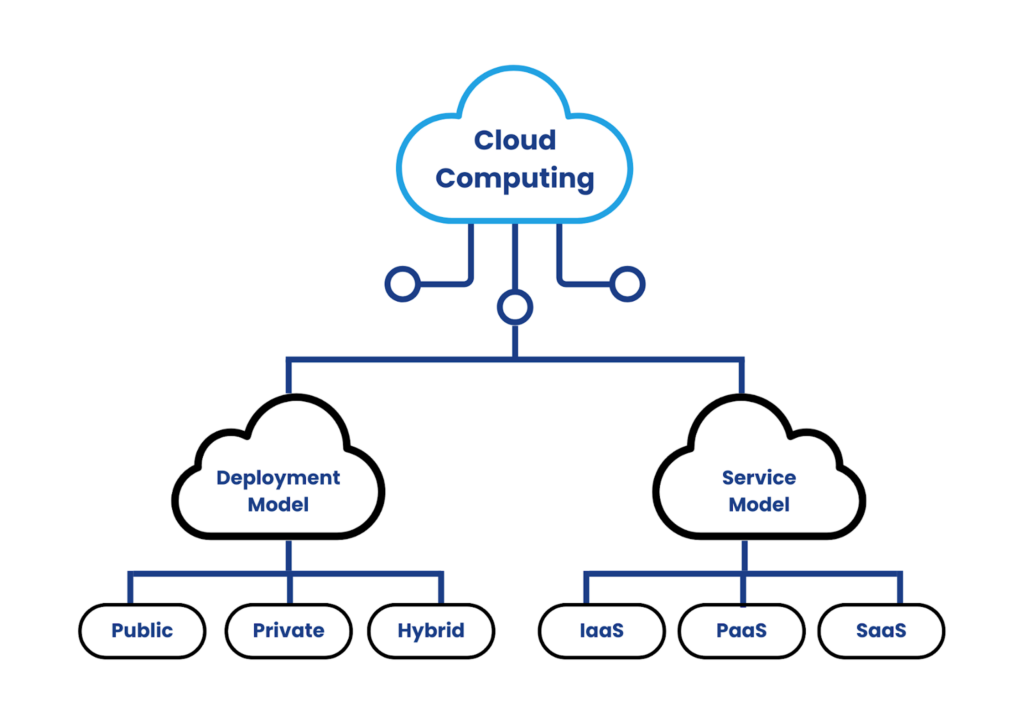

Most enterprises already use digital systems such as cloud tools, CRMs, ERPs, support platforms, analytics dashboards, and collaboration software. AI becomes useful when it improves those existing systems. It can sort information faster, detect patterns earlier, and help teams act with less delay. That is why AI in digital transformation works best when it is tied to real business processes instead of being treated like a separate trend.

This matters because many organizations are still learning how to move from isolated AI use cases to enterprise-wide value. McKinsey’s 2025 survey found AI use is widespread, but scaling practices such as governance, validation, roadmaps, and KPIs are still uneven. That means the opportunity is real, but execution still decides results.

How AI Improves Customer Experience

Customer experience is one of the clearest areas where AI creates value. Modern customers expect faster answers, more relevant service, and smoother support across channels.

AI helps enterprises meet those expectations through chatbots, virtual assistants, recommendation systems, and language tools. Natural language processing allows machines to understand and generate human language, which is why it powers many support and service experiences today. When used well, these tools reduce wait times and help teams personalize communication based on past behavior and current need.

This does not mean every customer interaction should be handed to automation. It means enterprises can use AI to handle repeatable requests, guide users faster, and give human teams more time for complex cases. That balance is often where customer experience improves the most.

How AI Raises Operational Productivity

Operational productivity has always been a core goal of digital transformation. AI helps by reducing manual work and speeding up decisions inside existing workflows.

Many enterprise processes still involve repetitive tasks such as data entry, document handling, routing, scheduling, ticket classification, and status updates. AI can automate parts of that work and reduce the burden on teams. Microsoft’s enterprise IT case studies describe AI as improving reliability, resiliency, and efficiency across internal operations, which is a strong example of how AI can support daily enterprise performance.

This is where AI in digital transformation becomes practical. The value is not just in saving time. The bigger gain often comes from fewer delays, better consistency, and more focus for people doing higher-value work.
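To make the ticket classification and routing idea above concrete, here is a minimal sketch in Python. It uses a simple keyword score as a stand-in for a trained classifier, and it sends weak or tied matches to a person instead of guessing. The queue names, keywords, and the `min_hits` threshold are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical queues and keywords, invented for this illustration.
QUEUE_KEYWORDS = {
    "billing": {"invoice", "refund", "charge", "payment"},
    "access": {"password", "login", "locked", "reset"},
    "shipping": {"delivery", "tracking", "package", "shipment"},
}


def route_ticket(text, min_hits=2):
    """Return a queue name, or 'human_review' when the match is weak or tied."""
    words = re.findall(r"[a-z]+", text.lower())
    # Count how many words in the ticket belong to each queue's keyword set.
    scores = {
        queue: sum(1 for word in words if word in keywords)
        for queue, keywords in QUEUE_KEYWORDS.items()
    }
    ranked = sorted(scores.values(), reverse=True)
    best_queue = max(scores, key=scores.get)
    # Keep a person in the loop when the signal is too weak or ambiguous.
    if ranked[0] < min_hits or ranked[0] == ranked[1]:
        return "human_review"
    return best_queue


if __name__ == "__main__":
    print(route_ticket("I need a refund for a duplicate charge on my invoice"))
    print(route_ticket("Where is my order"))
```

In a real deployment the keyword score would likely be replaced by a model trained on past tickets, but the fallback to human review for low-confidence cases is the part worth keeping.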

How AI Supports Data-Driven Decision Making

Enterprises generate data every day from customers, transactions, systems, suppliers, and internal teams. That data is valuable only when it can be turned into useful action.

AI helps process large volumes of structured and unstructured data faster than manual methods. Machine learning models can identify patterns, support forecasts, and help leaders make decisions with more context. IBM defines machine learning as the part of AI focused on learning from data patterns and making predictions or inferences without hard-coded instructions.

This makes AI especially useful for forecasting demand, monitoring performance, detecting anomalies, and improving planning. It does not remove the need for human judgment. It improves the speed and scale of analysis so teams can decide with better visibility.
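As a rough illustration of the demand forecasting mentioned above, the sketch below fits a straight-line trend to past values with ordinary least squares and projects it forward. It is a deliberately simple baseline, not a production forecasting method, and the function names and sample figures are assumptions made for this example.

```python
def fit_line(values):
    """Fit y = intercept + slope * x by least squares, with x = 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations to fit a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return slope, intercept


def forecast(values, steps_ahead=1):
    """Project the fitted trend `steps_ahead` periods past the last observation."""
    slope, intercept = fit_line(values)
    return intercept + slope * (len(values) - 1 + steps_ahead)


if __name__ == "__main__":
    # Hypothetical monthly unit sales.
    monthly_units = [100, 110, 120, 130]
    print(forecast(monthly_units))  # prints 140.0
```

A planner would still review the projection against what they know about promotions, seasonality, or supply limits, which is where human judgment stays in the process.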

How AI Creates Space For Innovation

AI does more than improve current work. It also creates room for new ideas.

When enterprises reduce repetitive tasks and improve visibility across operations, they free up time and budget for experimentation. Teams can test new service models, improve products faster, and respond to changing customer behavior with more confidence. AI can also support market analysis, product discovery, and content generation during early planning stages, which makes innovation cycles shorter and more practical.

This is one reason AI in digital transformation matters at the strategy level. It is not only about efficiency. It is also about giving enterprises more capacity to adapt, test, and improve.

How AI Strengthens Employee Performance

Many people still ask whether AI will replace employees. In most enterprise settings, that is the wrong question.

The more useful question is how AI changes the kind of work people do. Research and enterprise guidance increasingly frame AI as a tool for augmentation, not just replacement. IBM states that AI should enhance human intelligence with oversight, agency, and accountability, while Microsoft has described AI as a way to improve employee productivity and engagement.

That makes employee empowerment an important part of enterprise AI adoption. When AI handles repeatable tasks, people can spend more time on problem-solving, planning, relationship management, and creative work. The technology works best when teams are trained, supported, and given clear rules for how to use it well.

How AI Improves Cybersecurity And Risk Awareness

As enterprises digitize more of their work, the attack surface grows. That is why security and risk management must grow with it.

AI can support cybersecurity by monitoring activity, spotting unusual behavior, analyzing large volumes of signals, and helping security teams respond faster. Microsoft explains that AI for cybersecurity helps automate threat detection, identify patterns, and support real-time incident response. IBM also notes that AI tools can monitor for abnormalities in data access and alert teams to possible threats.

At the same time, AI introduces its own risks. NIST’s AI Risk Management Framework says AI risk management should be integrated into broader enterprise risk management. That means enterprises should not only ask what AI can improve. They should also ask how models are governed, validated, monitored, and controlled.

Common AI Solutions Enterprises Use

Enterprises usually do not adopt one single type of AI. They adopt a mix of capabilities that solve different problems across the business.

Machine Learning

Machine learning helps systems learn from data and improve over time. It is useful for forecasting, pattern recognition, demand planning, scoring, and classification.

Natural Language Processing

Natural language processing helps machines understand and generate human language. It is widely used in chatbots, search, summarization, transcription, and language-based support tools.

Computer Vision

Computer vision helps machines process and interpret images and video. It is commonly used in inspection, recognition, monitoring, and visual analysis tasks.

Robotic Process Automation

RPA uses software robots to automate repetitive digital tasks. It is useful in areas like billing, onboarding, claims handling, and back-office operations.

Predictive Analytics

Predictive models use past data to estimate likely future outcomes. Enterprises use them for demand planning, risk scoring, maintenance, and performance forecasting.

AI-Driven Personalization

AI helps enterprises tailor content, support, and product suggestions based on user behavior and context. This improves relevance and can reduce friction in the customer journey.
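One simple way to picture behavior-based suggestions is to compare a user's interest profile with the topics attached to each piece of content. The sketch below ranks items by cosine similarity. The profile weights, catalog entries, and function names are hypothetical, and real personalization systems typically use far richer signals.

```python
from math import sqrt


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(a[key] * b[key] for key in a.keys() & b.keys())
    norm_a = sqrt(sum(value * value for value in a.values()))
    norm_b = sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recommend(user_profile, catalog, top_n=2):
    """Return the `top_n` catalog item names most similar to the user profile."""
    ranked = sorted(
        catalog,
        key=lambda name: cosine(user_profile, catalog[name]),
        reverse=True,
    )
    return ranked[:top_n]


if __name__ == "__main__":
    # Hypothetical profile built from past clicks, weighted by topic.
    profile = {"security": 3, "analytics": 1}
    # Hypothetical help-center articles tagged by topic.
    articles = {
        "SSO guide": {"security": 1},
        "Dashboard tips": {"analytics": 1},
        "Billing FAQ": {"billing": 1},
    }
    print(recommend(profile, articles))
```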

AI For Cybersecurity

AI can help detect anomalies, prioritize alerts, and support faster response across complex environments. This is especially useful when security teams must monitor large volumes of activity.
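A very basic form of anomaly detection compares each new observation with a baseline learned from normal activity. The sketch below scores a value by how many standard deviations it sits from the historical mean. The failed-login counts, the threshold of 3.0, and the function names are assumptions for illustration. Production security tools rely on much more sophisticated models.

```python
from statistics import mean, stdev


def build_baseline(history):
    """Summarize normal activity as (mean, sample standard deviation)."""
    if len(history) < 2:
        raise ValueError("need at least two observations for a baseline")
    return mean(history), stdev(history)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag values more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # With no historical variation, any different value is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold


if __name__ == "__main__":
    # Hypothetical hourly counts of failed logins during normal operation.
    normal_hours = [4, 6, 5, 7, 5, 6, 4, 5, 6, 5]
    baseline = build_baseline(normal_hours)
    print(is_anomalous(40, baseline))  # prints True
    print(is_anomalous(6, baseline))   # prints False
```

A flagged value is a prompt for investigation, not a verdict. An analyst still decides whether the spike is an attack, a misconfigured service, or an expected event.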

What The Future Looks Like For Enterprises

The future of AI in digital transformation will likely be shaped by scale, governance, and workflow integration.

The direction is becoming clearer. Enterprises are looking for measurable value. Research on enterprise adoption points to the importance of leadership ownership, defined validation processes, and stronger adoption practices for turning AI into real business results. Microsoft also frames AI maturity as a staged journey rather than a one-time deployment.

That means successful enterprises will not win by adding the most AI features. They will win by choosing practical use cases, integrating AI into existing systems, and keeping human oversight strong. In the coming years, the gap may grow between companies that use AI as a business capability and companies that still treat it as a side experiment.

What Enterprises Should Do Next

AI in digital transformation is no longer just an idea for the future. It is already shaping how enterprises serve customers, run operations, manage risk, and support employees.

The smart next step is simple. Start with a real business problem. Tie AI to an existing workflow. Make sure the data is usable. Set clear ownership. Keep people in the loop. Then expand only when the first use case is working well. That is how enterprises turn AI from hype into durable value.

FAQs

What is the role of AI in digital transformation for enterprises?

AI helps enterprises accelerate digital transformation by automating routine work, improving data analysis, supporting faster decisions, and enabling more responsive customer experiences. In practice, enterprise AI is now used across operations, service, analytics, and risk management rather than as a standalone tool.

How does AI improve customer experience in enterprises?

AI improves customer experience through chatbots, virtual assistants, language tools, and personalization systems. These tools help enterprises respond faster, reduce friction, and tailor support to customer behavior and context.

How does AI support data-driven decision-making in digital transformation?

AI can process large volumes of data faster than manual methods and identify patterns that help with planning, forecasting, and prioritization. That makes decision-making more scalable and often more timely when the underlying data is reliable.

What are the most common AI solutions used in enterprise digital transformation?

Common solutions include machine learning, natural language processing, computer vision, robotic process automation, predictive analytics, personalization systems, and AI-supported cybersecurity. Each solves a different part of the enterprise workflow.

Can AI improve cybersecurity and risk management for enterprises?

Yes. AI can help detect anomalies, identify suspicious behavior, and support faster response across networks and systems. But AI also creates new governance demands, which is why NIST recommends integrating AI risk management into broader enterprise risk management processes.

Will AI replace employees in enterprises?

In most enterprise settings, AI is being used to augment employees rather than fully replace them. The bigger challenge is usually adoption, training, and governance, which means people remain essential for judgment, oversight, strategy, and problem-solving.